Part 2 of 5. ← Part 1: Why One Metric Isn't Enough · Part 3: Blocking → · Series index

Part 1 covered the problem of signal: the need for multiple similarity measures to capture the different ways real data varies. This post covers the opposite problem: noise. Noise here means tokens that carry no discriminating information, yet your matching system still spends compute on them and, worse, lets them corrupt its scores.

This is a subtler problem than choosing the right algorithm. It doesn't show up in toy examples because toy examples don't have the systematic noise patterns that production data does. It shows up when you're matching company names across two enterprise systems and you realize that 40% of your score is being driven by whether both records contain the word "Limited."

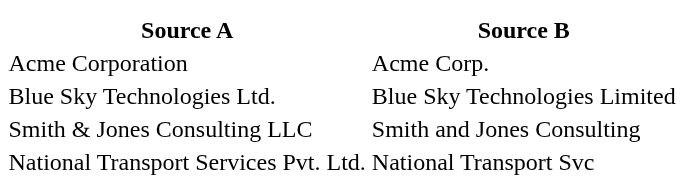

Consider matching company names. Your two datasets contain:

The meaningful token in every row is the company-specific name: "Acme", "Blue Sky Technologies", "Smith & Jones Consulting", "National Transport". Everything else — "Corporation", "Corp.", "Ltd.", "Limited", "LLC", "Pvt.", "Services", "Svc" — is either a legal suffix, an industry classification, or a generic word that appears in thousands of company names.

When you apply a string similarity metric to the full company name, these high-frequency tokens dominate the comparison. "Corporation" vs "Corp." looks like a significant difference at the character level. "Limited" vs "Ltd." is an edit distance of 5 (4 if the trailing period is dropped). "Services" vs "Svc" is an edit distance of 5. These differences pull your similarity scores down and push genuinely matching companies toward non-match territory.
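To see how large these character-level gaps are, here is a minimal sketch of Levenshtein edit distance in plain Python. It is only an illustration of the kind of metric being discussed, not the scoring Zingg uses internally, and the function name `levenshtein` is my own.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(
                previous[j] + 1,         # delete ch_a
                current[j - 1] + 1,      # insert ch_b
                previous[j - 1] + cost,  # match or substitute
            ))
        previous = current
    return previous[-1]


pairs = [("Corporation", "Corp."), ("Limited", "Ltd."), ("Services", "Svc")]
for left, right in pairs:
    print(f"{left!r} vs {right!r}: {levenshtein(left, right)}")
```

The three pairs come out at 7, 5, and 5 edits respectively. For strings this short, that is most of the string, even though each pair means exactly the same thing.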

The same dynamic appears across entity types:

In each case, the non-discriminating tokens aren't just useless — they're actively harmful, because they create apparent differences between records that are actually the same entity.

The naive fix is to write preprocessing rules: strip legal suffixes, normalize common abbreviations, lowercase everything. This works for the cases you anticipate. It fails for the cases you don't.

Every dataset has its own vocabulary of noise. A healthcare dataset has medical credential abbreviations. A financial dataset has regulatory entity type suffixes ("N.A.", "F.S.B.", "FDIC-insured"). A retail dataset might have category prefixes or SKU formatting conventions. A government dataset might have agency codes and departmental boilerplate.

You can write rules for the patterns you recognize. But production data contains patterns you've never seen — especially when you're matching across two systems built by different teams, in different eras, with different conventions. The noise you don't anticipate is the noise that costs you recall.

What you need is a data-driven way to identify which tokens in your specific dataset are high-frequency and non-discriminating — and then configure your matching system to ignore them.

The concept of stopwords comes from information retrieval: words that appear so frequently in a corpus that they carry no distinguishing signal. "The", "and", "of" are classic stopwords in document search. The same principle applies to entity matching, but the relevant stopwords are domain-specific.

Zingg's recommend phase automates stopword detection for your data. Run it against a column, and it extracts the top 10% most frequent unique tokens — the ones appearing so often that they can't be meaningfully discriminating:

```bash
./scripts/zingg.sh --phase recommend \
  --conf conf.json \
  --column companyName
```

The output is a CSV of candidate stopwords with their frequencies, stored at models/100/stopWords/companyName. You review the list (it takes a few minutes), then configure it per field:

```json
{
  "fieldName": "companyName",
  "matchType": "FUZZY",
  "stopWords": "models/100/stopWords/companyName.csv"
}
```

The stopWordsCutoff property lets you adjust the threshold if 10% is too aggressive or too conservative for your data:

```json
{
  "fieldName": "companyName",
  "matchType": "FUZZY",
  "stopWords": "models/100/stopWords/companyName.csv",
  "stopWordsCutoff": 0.05
}
```

In practice, this single change can produce a measurable improvement in precision and recall for company name and address matching. It often helps more than threshold tuning after the fact, because it removes the noise before the comparison rather than trying to compensate for it in the score.
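The idea behind frequency-based stopword detection can be sketched in a few lines of Python. This is not Zingg's implementation: the function name `recommend_stopwords`, the whitespace tokenization, and the lowercasing are all assumptions made for illustration. Only the "top share of unique tokens by frequency" logic mirrors the description above.

```python
import math
from collections import Counter


def recommend_stopwords(values, cutoff=0.10):
    """Return the most frequent `cutoff` share of unique tokens, with counts."""
    counts = Counter(
        token
        for value in values
        for token in value.lower().split()  # assumption: whitespace tokens
    )
    keep = max(1, math.ceil(len(counts) * cutoff))
    return counts.most_common(keep)


names = [
    "Acme Ltd",
    "Blue Sky Technologies Ltd",
    "Smith & Jones Consulting Ltd",
    "National Transport Ltd",
    "Harbor Freight Services",
    "Pioneer Analytics Services",
]

for token, count in recommend_stopwords(names):
    print(token, count)
```

On this toy list there are 16 unique tokens, so a 10% cutoff keeps the top two: "ltd" (4 occurrences) and "services" (2). Lowering the cutoff to 0.05 keeps only "ltd". The output is still only a list of candidates, which is why the review step matters: a frequent token can still be discriminating in your domain.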

Stopwords address tokens that appear too often to be useful. Normalization addresses the related problem of tokens that mean the same thing but are spelled differently across your sources.

"Street" and "St." are the same thing. "Corp." and "Corporation" and "Corp" are the same thing. "United States" and "USA" and "US" are the same thing. If you compare these before normalization, your similarity metric will penalize the difference. If you normalize them first, the comparison becomes exact.

Zingg's ONLY_ALPHABETS_FUZZY match type addresses a specific case of this: stripping numeric characters from a field before comparison. For address lines, this means the street number doesn't contaminate the street name comparison — "123 Main St" and "456 Main St" correctly score as similar addresses (same street name) when the numeric component is handled separately via a NUMERIC field for the number alone.
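As a rough Python analogue of that split (an illustration of the idea, not Zingg's internal logic), the sketch below separates the numeric tokens from the alphabetic remainder so each part can be compared on its own terms. The helper name `split_address` is hypothetical.

```python
import re


def split_address(line: str):
    """Separate numeric tokens from the alphabetic remainder of an address line."""
    numbers = re.findall(r"\d+", line)
    # Replace digit runs with a space, then collapse whitespace.
    text = " ".join(re.sub(r"\d+", " ", line).split())
    return text, numbers


text_a, numbers_a = split_address("123 Main St")
text_b, numbers_b = split_address("456 Main St")

print(text_a == text_b)        # street names agree once digits are set aside
print(numbers_a == numbers_b)  # house numbers are compared separately
```

The street-name comparison sees "Main St" on both sides, while the house numbers, which genuinely differ, are left for a separate numeric comparison.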

For broader normalization needs, Zingg's standardize postprocessor lets you define pre-match transformations: lowercase, trim whitespace, expand abbreviations, or apply any custom transformation before the matching logic runs. This is the right place to apply domain-specific normalization rules you already know about — before the model sees the data.
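The transformations listed above (lowercasing, trimming whitespace, expanding abbreviations) can be sketched in plain Python as follows. This is an upstream-pipeline illustration under my own assumptions, not Zingg's API: the `CANONICAL` table is hand-written and hypothetical, and `difflib.SequenceMatcher` stands in for whatever similarity metric you actually use.

```python
from difflib import SequenceMatcher

# Hypothetical variant table; a real one would come from your own domain.
CANONICAL = {
    "st": "street", "st.": "street",
    "corp": "corporation", "corp.": "corporation",
    "usa": "united states", "us": "united states",
}


def normalize(text: str) -> str:
    """Lowercase, collapse whitespace, and expand known abbreviations."""
    tokens = text.lower().split()
    return " ".join(CANONICAL.get(token, token) for token in tokens)


def similarity(a: str, b: str) -> float:
    return SequenceMatcher(None, a, b).ratio()


raw = similarity("12 Main St.", "12 Main Street")
clean = similarity(normalize("12 Main St."), normalize("12 Main  Street"))
print(f"raw={raw:.2f} normalized={clean:.2f}")
```

Before normalization the two address lines score below 1.0. After it, both reduce to the same string and the comparison is exact.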

Beyond statistical stopwords and normalization rules, some noise is structural — known variations that come from the nature of the domain rather than from data quality problems.

Company name abbreviations are a classic example: "IBM" and "International Business Machines" are the same company, but no string algorithm will tell you that. "Pvt" and "Private" and "Prv" are all the same legal suffix, but they're not phonetically related and they're too short for character-level metrics to be reliable.

This is what the MAPPING match type handles. You define a lookup file mapping known variants to a canonical form, and Zingg uses it during comparison:

```json
{
  "fieldName": "companyName",
  "matchType": "MAPPING_(company_abbreviations.csv)"
}
```

A company_abbreviations.csv might contain:

```
IBM, International Business Machines
Int. Biz. Machine, International Business Machines
Pvt, Private
Prv, Private
Corp, Corporation
Corp., Corporation
```

The effect is that matches that would fail at the character level — because the token overlap is too low — become matchable once the canonical forms are compared. This is particularly important for short strings where small differences have an outsized effect on similarity scores.

As noted in Part 1, MAPPING is part of Zingg Enterprise. The same mechanism that handles company abbreviations also handles personal name nicknames — "Bob" → "Robert", "Bill" → "William" — turning what would otherwise be a zero-overlap non-match into a straightforward comparison.
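To make the lookup idea concrete, here is a small Python sketch that loads the same rows as the example file and rewrites variants to their canonical form before comparison. How Zingg applies the mapping internally is not described here, so treat this purely as an illustration. The helpers `load_mapping` and `canonicalize` are hypothetical, as is the choice to try a whole-value match before a token-by-token one.

```python
import csv
import io

MAPPING_CSV = """\
IBM, International Business Machines
Int. Biz. Machine, International Business Machines
Pvt, Private
Prv, Private
Corp, Corporation
Corp., Corporation
"""


def load_mapping(text: str) -> dict:
    """Parse 'variant, canonical' rows into a case-insensitive lookup."""
    reader = csv.reader(io.StringIO(text), skipinitialspace=True)
    return {row[0].strip().lower(): row[1].strip() for row in reader if len(row) == 2}


def canonicalize(value: str, mapping: dict) -> str:
    """Map the whole value if it is a known variant, else map token by token."""
    key = value.strip().lower()
    if key in mapping:
        return mapping[key]
    return " ".join(mapping.get(token.lower(), token) for token in value.split())


mapping = load_mapping(MAPPING_CSV)
print(canonicalize("IBM", mapping))
print(canonicalize("Acme Pvt", mapping))
print(canonicalize("Acme Prv", mapping))
```

"IBM" becomes "International Business Machines", and both "Acme Pvt" and "Acme Prv" become "Acme Private", so pairs with almost no character overlap end up identical after canonicalization.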

The practical approach is layered, applied in order before any similarity computation:

Layer 1: Normalization — case normalization, whitespace trimming, known abbreviation expansion, character encoding standardization. Apply these in the standardize postprocessor or in your upstream pipeline. These are the things you know about.

Layer 2: Stopword removal — data-driven identification and removal of high-frequency non-discriminating tokens. Apply per field using the recommend phase output. This catches the noise you didn't anticipate.

Layer 3: Domain mapping — lookup-table-based expansion of known abbreviations and variants. Apply via the MAPPING match type. This handles the structural domain knowledge that algorithms can't learn from character patterns.

Layer 4: Field-appropriate comparison — apply the right match type to each field, as covered in Part 1. Once the above layers have cleaned the data, the similarity comparison is working on signal, not noise.

The order matters. Normalization before stopword detection produces a cleaner stopword list — because "Ltd." and "ltd" and "LTD" will collapse to one token, preventing the same noise from appearing under three entries. Stopword removal before comparison prevents noise from diluting similarity scores. Domain mapping before comparison enables matches that algorithms alone can never make.
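The layering can be sketched end to end in Python. Everything here is an assumption made for illustration: the `STOPWORDS` set stands in for a reviewed stopword list, `MAPPING` is a hypothetical lookup table, and `difflib.SequenceMatcher` stands in for the field-level comparison. What it shows is the ordering, with each layer running before any similarity is computed.

```python
from difflib import SequenceMatcher

# Layer 2 input: in practice, a reviewed stopword list for this field.
STOPWORDS = {"corp", "corporation", "inc", "ltd", "limited", "llc"}
# Layer 3 input: hypothetical lookup of known variants to a canonical form.
MAPPING = {"ibm": "international business machines"}


def normalize(name: str) -> str:
    """Layer 1: lowercase, drop token-edge periods and commas, collapse whitespace."""
    tokens = (token.strip(".,") for token in name.lower().split())
    return " ".join(token for token in tokens if token)


def remove_stopwords(name: str) -> str:
    """Layer 2: drop high-frequency, non-discriminating tokens."""
    return " ".join(token for token in name.split() if token not in STOPWORDS)


def apply_mapping(name: str) -> str:
    """Layer 3: replace a known variant with its canonical form."""
    return MAPPING.get(name, name)


def prepare(name: str) -> str:
    return apply_mapping(remove_stopwords(normalize(name)))


def score(a: str, b: str) -> float:
    """Layer 4: compare only what survives the first three layers."""
    return SequenceMatcher(None, prepare(a), prepare(b)).ratio()


raw = SequenceMatcher(None, "Acme Corp.", "ACME Corporation").ratio()
layered = score("Acme Corp.", "ACME Corporation")
print(f"raw={raw:.2f} layered={layered:.2f}")
```

Because normalization runs first, "Corp." and "corp" collapse to one token, so the stopword set needs only a single entry for them. "IBM Corp." and "International Business Machines Inc." score as identical only because the mapping is applied after the suffixes have been removed.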

To make this concrete: consider a dataset of 500,000 company records across two systems, where legal suffixes like "Corp.", "Inc.", "Ltd.", and "LLC" appear in roughly 60% of records.

Without stopword handling, a fuzzy match on the full company name string is effectively comparing "CompanyName + LegalSuffix" against "CompanyName + LegalSuffix". Since the legal suffixes vary independently of the company name (one system might always use "Inc.", the other might abbreviate it), they act as a source of noise that reduces similarity scores across the board. The result is a lower-than-expected recall: genuine matches that score below threshold because their legal suffixes happened to differ.

With stopword removal, the comparison collapses to the company name itself. The legal suffix variation is filtered before scoring, and recall improves without any change to the matching algorithm or threshold.

This pattern, cleaning the signal before measuring similarity, is the highest return-per-effort improvement available in most real-world fuzzy matching pipelines. It's also the one most frequently skipped, because it requires domain knowledge about your data rather than algorithmic tuning.

Up next: Part 3 — Blocking: Making Billion-Record Matching Tractable
