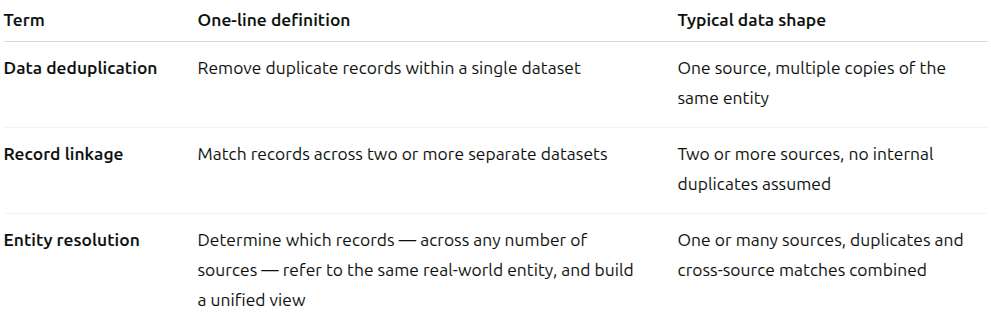

The terminology is messy. The problems are related but distinct. Here's a plain-language map.

If you work in data engineering, you've almost certainly used these terms interchangeably at some point — or watched someone else do it in a Jira ticket, a vendor demo, or a team discussion. "We need to deduplicate the CRM." "Can we do record linkage across these two systems?" "I think what we actually need is entity resolution."

They're all pointing at similar things, but they're not the same thing — and using the wrong term in the wrong context leads to scoping the wrong solution. This post draws the lines clearly.

Entity resolution is the superset. Deduplication and record linkage are specific instances of it.

The problem it solves: You have a single dataset — a CRM export, a customer table, a supplier list — and it contains multiple rows for the same real-world entity. You want to collapse them into one canonical record.

Classic scenario: Your marketing team has been importing leads from trade shows for three years. The same person appears 14 times with slightly different names, email addresses, and job titles. Before you send the next campaign, you need to find and merge those duplicates.

What makes it hard: Exact matches are easy; it's the near-matches that cause problems. "Jon Smith, j.smith@acme.com" and "John Smith, john.smith@acme.com" might be the same person — or two different people at the same company. You can't know without comparing multiple fields together, and even then, you're dealing with probabilities, not certainties.

In Zingg: The match phase handles deduplication within a single source. The match phase also handles entity resolution, but we will come to that later. Zingg learns a blocking model and a similarity model from a small set of labeled examples. The blocking model narrows down candidate pairs (Zingg typically evaluates 0.05–1% of the total possible comparison space), and the similarity model scores each pair. Records that score above a threshold are grouped into the same z_cluster. You configure how each field participates using match types — FUZZY for names with typos, EMAIL to match only the local part of an address, EXACT for country codes or other categorical fields that shouldn't vary.

{ "fieldName": "firstName", "matchType": "FUZZY" }, { "fieldName": "email", "matchType": "EMAIL" }, { "fieldName": "country", "matchType": "EXACT" }

The problem it solves: You have two (or more) separate datasets that are each internally clean — no duplicates within either — but you need to find the records across them that refer to the same entity.

Classic scenario: Your CRM holds customer profiles. Your billing system holds account records. They were built by different teams, use different ID schemes, and have never been formally joined. You need to match a CRM contact to a billing account so you can build a unified view of each customer.

What makes it different from deduplication: The assumption changes. In deduplication, you're looking for redundancy within one source and eliminating it. In record linkage, both sources are authoritative — you're not removing anything, you're establishing a correspondence between them. The output is typically a mapping table: record A in source 1 corresponds to record B in source 2.

What makes it hard: Each source likely has different fields, different formats, different conventions, and different data quality characteristics. The CRM might store full names; billing might have first and last in separate fields. Phone numbers in one system might include country codes; in the other they might not. Every cross-source difference you encounter requires a matching decision.

In Zingg: The link phase is built for this. It takes records from one source and matches each of them against all records in the other source(s). Each matched pair is assigned the same z_cluster id, and the z_source column in the output tells you which source each record came from:

./zingg.sh --phase link --conf configLink.json

The key difference from match is directionality: link assumes the individual sources are already clean, so it doesn't look for duplicates within a source — only across sources. This matters both for correctness and for performance.

The problem it solves: The full, general problem — determining which records, across any combination of sources with any degree of internal inconsistency, refer to the same real-world entity, and producing a reliable, unified view of each entity.

Classic scenario: You're building a customer 360 view. You have a CRM, a billing system, a support ticketing platform, an e-commerce database, and a legacy on-premise system from 2009 that nobody fully understands but that still has relevant data. Records might exist in one system, several systems, or all five. Some systems have internal duplicates. Across systems, the same customer appears under different names, emails, and IDs. You need to figure out who is who — and keep that knowledge current as new records arrive.

What makes it the hardest of the three: Entity resolution is the combination of deduplication and record linkage, applied simultaneously across an arbitrary number of sources, with the additional requirement of producing durable, reliable entity identifiers — IDs that remain stable over time as new records arrive and existing records change. It also typically involves the cluster formation problem: if record A matches B and B matches C, are A and C the same entity even if they don't directly match? Getting transitivity right without creating false chains is non-trivial.

Why the terminology is so confused: Entity resolution is known by at least a dozen names depending on the industry and era: data matching, fuzzy matching, data linkage, profile unification, match-merge, record deduplication. They all describe overlapping aspects of the same underlying problem. The variation reflects the fact that different industries encountered the problem independently and named it after their specific use case.

In Zingg: Entity resolution is what Zingg was built for. The framework handles deduplication (match) and cross-source linkage (link) as distinct phases that can be composed. The output of both phases is a z_cluster ID — a stable identifier that groups all records determined to belong to the same real-world entity, regardless of which source they came from. Zingg also supports incremental data — adding new records without reprocessing everything — which is essential for entity resolution in production, where data is always arriving.

The active learning loop is what makes Zingg's entity resolution practical without a large pre-labeled dataset: you label a few hundred record pairs as match / no match / can't say, Zingg trains on those labels and builds a model, and then you run match or link on the full dataset. You can also combine multiple match models for datasets where different entity types need to be matched with different logic.

When someone says "we need to deduplicate / link / resolve our data", here's a quick way to figure out which problem you're actually solving:

Is the data all in one source or multiple?

Are the individual sources already clean (no internal duplicates)?

Do you need a durable, stable entity ID that survives incremental data loads?

Is the problem bounded (one-time project) or ongoing (new data arrives continuously)?

In practice, most real data problems that people describe as "deduplication" turn out to be entity resolution once you look closely enough — because the data is never just in one place, the sources are never entirely clean, and the business needs a result that stays accurate over time, not just once.

This is why the distinction matters: if you scope a project as "deduplicate the CRM," you'll build a one-time batch job that solves a fraction of the problem. If you scope it as entity resolution across your customer data sources, you build something that actually holds up.

Zingg is designed to handle all three problem types — deduplication, record linkage, and full entity resolution — with the same underlying model and the same configuration format. The step-by-step guide covers setting up each phase, and the field definitions reference explains how to configure match types for different kinds of data.

Zingg is open source. You can find us on GitHub, browse the documentation, or join the conversation on Slack.